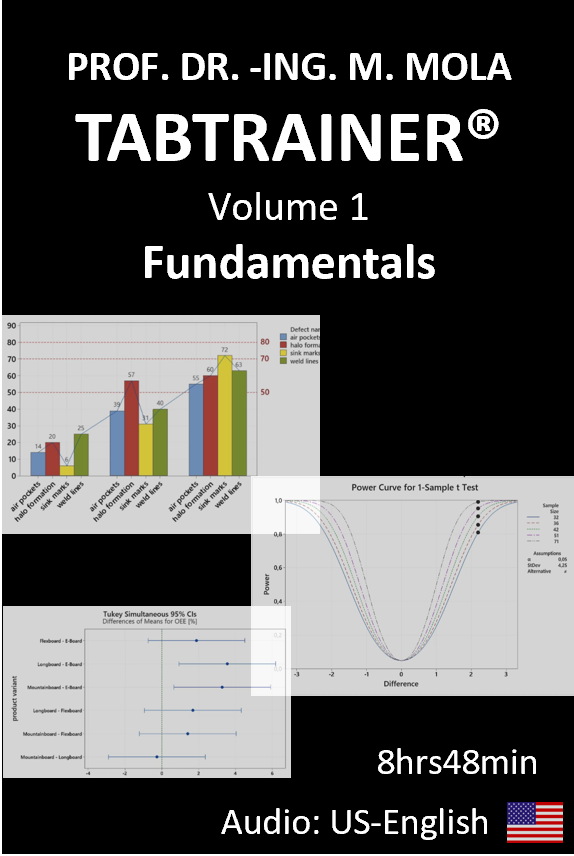

Minitab® Tutorial - TABTRAINER® VOLUME 1: FUNDAMENTALS €129,99

- AUDIO LANGUAGE: US ENGLISH

- NO RISK: 60-DAY MONEY-BACK GUARANTEE!

- NO ON-GOING FEES – ONE-TIME PAYMENT

- LIFELONG AND UNLIMITED TABTRAINER® 24/7 ONLINE ACCESS VIA PC, ANDROID, IOS.

- FREE UPDATES TO DATA SETS AND EXERCISES.

- NUMEROUS MINITAB®/ EXCEL® DATASETS FOR DOWNLOAD.

The TABTRAINER® investment is credited in full toward a TÜV CERT® SIX SIGMA PRO training course booked with Prof. Dr. Mola.

Contact for volume discounts: info@sixsigmapro.de

- 01 MINITAB® USER INTERFACE

- 02 TIME SERIES ANALYSIS

- 03 BOXPLOT ANALYSIS

- 04 PARETO ANALYSIS

- 05 t-TEST, 1-SAMPLE, PART 1

- 05 t-TEST, 1-SAMPLE, PART 2

- 05 t-TEST, 1-SAMPLE, PART 3

- 06 t-TEST, 2 SAMPLES

- 07 t-TEST, PAIRED SAMPLES

- 08 TEST FOR PROPORTIONS, BINOMIAL DISTRIBUTION

- 09 CHI-SQUARE TEST FOR PROPORTIONS

- 10 ONE-SAMPLE POISSON RATE

- 11 ONE-WAY ANALYSIS OF VARIANCE (ANOVA)

- 12 GENERAL LINEAR MODEL: 2-WAY ANOVA

- 13 BLOCKED ANOVA

01 MINITAB® USER INTERFACE

In our first Minitab tutorial, we will familiarize ourselves with the Minitab user interface before we get into the actual data analyses, and we will see how to import, export, and securely save the various Minitab file types. Once we are familiar with interface areas such as the navigation, output, and history windows, we will use a typical Minitab data set to see how a Minitab project can be structured. We will then take a closer look at how worksheets are structured and how different data types are indexed in them, and we will learn how to add our own comments and text notes to worksheets.

MAIN TOPICS

- Working in the navigation, output, and history windows

- Data import from different sources

- Set specific default storage locations for projects

- Indexing of data types

- Customizing the interface view

- Add comments and notes in text form

- Save and close worksheets and projects

02 TIME SERIES ANALYSIS

In the second Minitab tutorial, we will accompany the quality management team of Smartboard Company and see how the scrap rate of the last financial years is analyzed step by step using time series analysis. Using time series plots as an example, we will see how data preparation and analysis are carried out, and we will get to know the different types of time series plots. We will learn about the useful timestamp function and how to extract subsets from a worksheet. With the brush function, we will learn how to analyze individual data clusters in the edit mode of a graph. The calendar function will also be covered as part of our data preparation. We will also understand what identification variables are and how they benefit our data analysis. In addition, we will see how to export our analysis results to PowerPoint or Word for team meetings with little effort.

MAIN TOPICS MINITAB TUTORIAL 02

- Time series plot, fundamentals

- Row and column capacity of Minitab worksheets

- Interpret import preview window

- Update file names

- Extract dates

- Design and structure of time series plots

- Working with the timestamp

- Extracting data

- Forming subsets of worksheets

- Extract weekdays from dates

- Highlighting data points in the edit mode of a graph

- Examination of data clusters using the brush function

- Defining identification variables

- Export of Minitab analysis results to MS Office applications
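The idea behind extracting weekdays from dates and forming a worksheet subset can be illustrated outside Minitab with a short Python sketch. This is only an illustration under our own assumptions: the records, the column names `date` and `scrap_rate`, and the values are hypothetical, and in Minitab these steps are carried out through the functions covered in the tutorial rather than through code.

```python
from datetime import date

# Fixed English weekday names, indexed by date.weekday() (Monday == 0),
# so the result does not depend on the system locale.
WEEKDAYS = ("Monday", "Tuesday", "Wednesday", "Thursday",
            "Friday", "Saturday", "Sunday")

def add_weekday(rows):
    """Return copies of the rows with an extra 'weekday' column derived from 'date'."""
    return [{**row, "weekday": WEEKDAYS[row["date"].weekday()]} for row in rows]

def subset(rows, predicate):
    """Keep only the rows for which predicate(row) is True (a worksheet subset)."""
    return [row for row in rows if predicate(row)]

# Hypothetical scrap-rate records (values invented for illustration only).
records = [
    {"date": date(2024, 1, 1), "scrap_rate": 2.1},
    {"date": date(2024, 1, 2), "scrap_rate": 1.8},
    {"date": date(2024, 1, 5), "scrap_rate": 3.4},
]

with_days = add_weekday(records)
fridays = subset(with_days, lambda r: r["weekday"] == "Friday")
print([r["weekday"] for r in with_days])  # ['Monday', 'Tuesday', 'Friday']
print(len(fridays))                       # 1
```

Using a fixed tuple of names instead of `strftime("%A")` keeps the weekday labels identical on every machine.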

03 BOXPLOT ANALYSIS

In the third Minitab tutorial, we will accompany the quality improvement project of Smartboard Company and analyze the scrap rate of the last fiscal years using boxplot analysis. We will see how the team compares different data groups to find out, for example, whether more or less scrap was generated on certain production days than on the other production days. In this context, we will learn what a boxplot is, how it is structured, and what useful information it provides. We will also see that the particular advantage of boxplot analysis is that it lets us compare statistical parameters such as the median, the arithmetic mean, and the minimum and maximum values of different data sets in a compact and clear way. We will also learn how to add further information elements to the data display, carry out a hypothesis test for outliers, and create and interpret an individual value plot. The automation of recurring analyses using macros, which is particularly useful in day-to-day business, will also be introduced. Finally, we will create a custom button in the menu bar so that recurring daily analysis routines, for example for the daily quality report, can be run with a single click, saving time in busy day-to-day operations.

MAIN TOPICS MINITAB TUTORIAL 03

- Boxplot analysis, fundamentals

- Basic structure and interpretation of boxplots

- Quantiles, quartiles, medians and arithmetic means in boxplots

- Display of data outliers in the boxplot

- Boxplots for a data set with an even number of values

- Boxplots for a data set with an odd number of values

- Comparison of boxplot types

- Creating and interpreting boxplots

- Working in boxplot editing mode

- Grubbs' hypothesis test for outliers

- Generating and interpreting individual value plots

- Automation of analyses with the help of macros

- Creating an individual button in the menu bar
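The quartiles behind a boxplot, and the reason data sets with an even or odd number of values behave differently, can be illustrated with a short Python sketch. We assume the (n + 1)p positioning rule with linear interpolation, which is one common convention and, to our understanding, the one Minitab uses; other software may use a different rule. Points more than 1.5 × IQR beyond the box are flagged as outliers, the usual boxplot convention.

```python
def quantile(values, p):
    """Quantile at probability p using the (n + 1) * p position rule with interpolation."""
    data = sorted(values)
    n = len(data)
    pos = (n + 1) * p          # 1-based position in the sorted data
    if pos <= 1:
        return data[0]
    if pos >= n:
        return data[-1]
    k = int(pos)               # lower neighbour (1-based)
    frac = pos - k             # fraction of the way towards the upper neighbour
    return data[k - 1] + frac * (data[k] - data[k - 1])

def boxplot_stats(values):
    """Quartiles, IQR and outliers (beyond 1.5 * IQR) for one data group."""
    q1, med, q3 = (quantile(values, p) for p in (0.25, 0.5, 0.75))
    iqr = q3 - q1
    low_fence, high_fence = q1 - 1.5 * iqr, q3 + 1.5 * iqr
    outliers = [x for x in values if x < low_fence or x > high_fence]
    return {"q1": q1, "median": med, "q3": q3, "iqr": iqr, "outliers": outliers}

# n = 7: positions 2, 4 and 6 fall exactly on data points.
print(boxplot_stats([1, 2, 3, 4, 5, 6, 7]))
# n = 8: positions 2.25, 4.5 and 6.75 require interpolation; 30 lies above the upper fence.
print(boxplot_stats([1, 2, 3, 4, 5, 6, 7, 30]))
```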

04 PARETO ANALYSIS

In the fourth Minitab tutorial, we will see how the quality team at Smartboard Company uses Pareto analysis to examine its delivery performance. We will first understand how a Pareto chart is structured and what information it provides. As part of our Pareto analysis, we will then get to know a number of other useful options, for example how to quickly retrieve location and dispersion parameters and how to perform arithmetic operations with the calculator function. We will learn how to create a pie chart and a histogram and understand, for example, how the number and width of the bars (classes) in the histogram are calculated. We will also learn how to assign continuously scaled data to categorical value ranges and how to code these value ranges individually to create the Pareto chart. In addition, we will learn how to extract data using the corresponding function commands.

MAIN TOPICS MINITAB TUTORIAL 04

- Pareto analysis, fundamentals

- Retrieving and interpreting worksheet information

- Working with the calculator function

- Display of missing values in the data set

- Retrieving location and dispersion parameters using descriptive statistics

- Comparison of histogram types

- Creating and interpreting histograms and pie charts

- Calculation of the number and width of bars in the histogram

- Creating and interpreting a Pareto chart

- Creating specific value ranges in the Pareto chart

- Recoding continuously scaled data into categorical value ranges for the Pareto chart

- Targeted extraction of individual information from data cells

- Creation of Pareto diagrams based on different categorical variables
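The table behind a Pareto chart sorts the categories by frequency in descending order and adds each category's percentage and the cumulative percentage. A minimal Python sketch of that calculation follows; the category names and counts are hypothetical.

```python
def pareto_table(counts):
    """Sort categories by count (descending) and add percent and cumulative percent."""
    total = sum(counts.values())
    rows, cumulative = [], 0
    for category, count in sorted(counts.items(), key=lambda kv: kv[1], reverse=True):
        cumulative += count
        rows.append((category, count, 100 * count / total, 100 * cumulative / total))
    return rows

# Hypothetical delivery-problem counts, for illustration only.
counts = {"wrong quantity": 20, "late": 50, "damaged": 30}
for category, count, pct, cum_pct in pareto_table(counts):
    print(f"{category:15s} {count:4d} {pct:6.1f}% {cum_pct:6.1f}%")
```

The bars of the Pareto chart follow the sorted counts, and the cumulative line follows the last column.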

05 t-TEST, 1-SAMPLE, PART 1

In the fifth Minitab tutorial, we will accompany the heat treatment process for skateboard axles and see how a hypothesis test, the one-sample t-test, can be used to find out whether the heat treatment process is set up so that the skateboard axles achieve the required compressive strength. To achieve this strength, the skateboard axles undergo a multi-stage heat treatment process. Our task in this Minitab tutorial is to use sample data and the one-sample t-test to make a reliable recommendation to production management as to whether the current heat treatment process is adjusted well enough, or whether it may even need to be stopped and optimized because our hypothesis test shows that the required target mean is not being achieved. At the core of this Minitab tutorial, we will see how a hypothesis test is properly carried out on a sample, and we will first check whether our data set follows a normal distribution. Using a power analysis, we will work out whether the sample size is large enough. Using the density function and the probability distribution plot, we will learn how to locate the t-value of the t-test and understand which incorrect decisions are possible in a hypothesis test. With the help of an individual value plot, we will also develop an understanding of the confidence interval in the context of the one-sample t-test.

MAIN TOPICS MINITAB TUTORIAL 05, part 1

- t-test, one sample, fundamentals

- Retrieving and interpreting worksheet information

- Retrieving and interpreting descriptive statistics

- The power of a hypothesis test

- Anderson-Darling test as a preliminary step before the t-test

- Derivation of the probability plot based on the density function

- Interpretation of the probability plot

- Performing the one-sample t-test for the mean

- Establishing the null hypothesis and alternative hypothesis

- Test for normal distribution according to Anderson-Darling

- The probability plot of the normal distribution
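The test statistic of the one-sample t-test is t = (x̄ − μ₀) / (s / √n) with n − 1 degrees of freedom. The Python sketch below computes it with the standard library only; the measurements and the target mean μ₀ are hypothetical toy values, and the p-value, which Minitab reports directly, is omitted because the standard library has no t-distribution.

```python
import math
import statistics

def one_sample_t(sample, mu0):
    """Return (t, degrees of freedom) for H0: population mean == mu0."""
    n = len(sample)
    mean = statistics.mean(sample)
    s = statistics.stdev(sample)   # sample standard deviation (n - 1 denominator)
    se = s / math.sqrt(n)          # standard error of the mean
    return (mean - mu0) / se, n - 1

# Hypothetical strength measurements and target mean, for illustration only.
t, df = one_sample_t([10, 12, 14], mu0=10)
print(round(t, 3), df)  # 1.732 2
```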

05 t-TEST, 1-SAMPLE, PART 2

MAIN TOPICS MINITAB TUTORIAL 05, part 2

- Generation and interpretation of individual value plot as part of the t-test

- Confidence level and probability of error

- Sample size and significance level

- Type I and type II errors in the hypothesis decision

05 t-TEST, 1-SAMPLE, PART 3

MAIN TOPICS MINITAB TUTORIAL 05, part 3

- Power analysis and sample size in the t-test

- Influence of the sample size on the hypothesis result

- Graphical construction of the probability distribution

- Interpretation of the power curve

- Determining the sample size based on the required power

- Influence of different sample sizes on the hypothesis decision
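The link between significance level, power, and sample size can be sketched with the normal-approximation formula n ≈ ((z₁₋α/₂ + z₁₋β) · σ / δ)², where δ is the difference to be detected and σ the standard deviation. This is a simplification we chose for illustration: an exact calculation based on the t-distribution, as used for the t-test, typically returns a slightly larger n. The parameter values in the example are hypothetical.

```python
import math
from statistics import NormalDist

def sample_size_normal_approx(delta, sigma, alpha=0.05, power=0.80):
    """Approximate n for a two-sided one-sample test (z approximation)."""
    z = NormalDist()                    # standard normal distribution
    z_alpha = z.inv_cdf(1 - alpha / 2)  # critical value of the two-sided test
    z_beta = z.inv_cdf(power)           # quantile matching the desired power (1 - beta)
    n = ((z_alpha + z_beta) * sigma / delta) ** 2
    return math.ceil(n)                 # round up to a whole number of parts

# Hypothetical: detect a shift of half a standard deviation with 80 % power.
print(sample_size_normal_approx(delta=0.5, sigma=1.0))  # 32
```

Halving δ roughly quadruples the required sample size, which matches the influence of the sample size on the hypothesis decision discussed in this part.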

06 t-TEST, 2 SAMPLES

In the sixth Minitab tutorial, we are in the purchasing department of Smartboard Company, and we will see how the team uses the two-sample t-test to evaluate the delivery quality of different screw suppliers. Smartboard Company previously purchased its screws from a single supplier. Because of the current increase in demand, that supplier has repeatedly faced supply bottlenecks, and skateboard production has had to be stopped due to a lack of screws. For this reason, Smartboard Company has recently started sourcing screws from a second manufacturer in addition to the previous supplier, in order to avoid future production bottlenecks. From a quality point of view, it is therefore very important that the mechanical properties of the screws from the new supplier do not differ significantly from those of the previous supplier. Our task in this Minitab tutorial will be to use the two-sample t-test to work out whether there are significant differences in strength properties between the two suppliers. As part of the two-sample t-test, we will also discuss a number of other useful functions and topics, use dot plots and boxplots, and learn about the useful “Graphical Summary” function. We will understand what the distribution shape parameters kurtosis and skewness mean. In addition to comparing the means, we will carry out a two-sample test for variances to compare the variances of our data sets. For this variance test, we will familiarize ourselves with the Bonett and Levene methods so that we can properly set up the corresponding hypotheses and interpret the results in an understandable way. Finally, we will get to know the Layout Tool, which combines the most important analysis graphs and plots into a single graphical layout.

MAIN TOPICS MINITAB TUTORIAL 06

- t-test, 2 samples

- Carrying out a power analysis to determine the sample size

- Basic mathematical idea of degrees of freedom

- Performance and interpretation of the t-test for two samples

- Formulation of the null hypothesis and alternative hypothesis

- Box plot „Multiple Y, simple“ as part of the t-test

- Rescaling of dot plots

- Creation and interpretation of boxplots in the t-test

- Working with the “Graphical Summary” option

- Shape parameters kurtosis and skewness of the data distribution

- Test for variances, 2 samples

- P-values of the Bonett and Levene tests for variances

- Working with the „Layout Tool“
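For the two-sample t-test without the assumption of equal variances (the Welch version, which we understand to be Minitab's default setting), the t-value and the Welch–Satterthwaite degrees of freedom can be computed as in the Python sketch below. The samples are hypothetical toy values standing in for the screw-strength measurements of the two suppliers.

```python
import math
import statistics

def welch_t(a, b):
    """Two-sample t statistic and Welch-Satterthwaite df (variances not assumed equal)."""
    va = statistics.variance(a) / len(a)  # squared standard error of mean(a)
    vb = statistics.variance(b) / len(b)  # squared standard error of mean(b)
    t = (statistics.mean(a) - statistics.mean(b)) / math.sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df

# Hypothetical toy values for the previous and the new supplier.
t, df = welch_t([1, 2, 3], [4, 5, 6])
print(round(t, 3), round(df, 1))  # -3.674 4.0
```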

07 t-TEST, PAIRED SAMPLES

In the seventh Minitab tutorial, we find ourselves in the development department of Smartboard Company. As part of prototype manufacturing, Smartboard Company has developed two new high-performance materials made of stainless-steel powder. The powder form is necessary because the skateboard axles for the professional sector are no longer to be manufactured from die-cast aluminum as before, but by the SLM production method. SLM stands for “selective laser melting” and is currently one of the most innovative rapid prototyping processes. In this process, the stainless-steel powder is completely remelted by a laser beam and applied in three-dimensional layers under computer control. The layers are applied in several cycles: after each layer solidifies, the next layer of powder is applied and remelted, until the 3D printing of the axle is complete. During prototype development, the team worked intensively on optimizing the stainless-steel powder used for 3D printing. Accordingly, our core task in this training session will be to find out, on the basis of a random sample and a suitable hypothesis test, which of the two types of stainless-steel powder has the better, i.e., higher, toughness. Toughness, the key technological parameter here, is measured in joules and indicates how well the skateboard axles resist breaking or crack propagation under impact load. According to the research director's specifications, we are to draw an indirect conclusion about the production population on the basis of a random sample and make a recommendation with 95% confidence as to whether the mean toughness values differ from each other by at least 10 joules. In the course of this training session, we will guide the quality team in finding out which material has the better toughness properties for the skateboard axles using the paired t-test.
In the further course of this Minitab training, we will also see that this time we are dealing with more than two production populations. We will learn why the paired t-test, rather than the classic two-sample t-test, is the method of choice in such cases. We will take a closer look at the formula for the t-value in the paired t-test, calculate the most important parameters, and compare them with the values in the output window. In this context, we will again use the calculator function to determine the relevant parameters. Finally, we will perform the paired t-test with the Minitab Assistant, which guides us to the correct analysis through a series of decision questions and is particularly useful in busy day-to-day work.

MAIN TOPICS MINITAB TUTORIAL 07

- t-test, paired samples

- Derivation of the test statistic in the t-test for paired samples

- Formulation of the null hypothesis and alternative hypothesis

- t-test, paired samples versus t-test, 2 samples

- Interpretation of the hypothesis test results

- Working with the calculator function

- Working with the „Minitab Assistant“
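The paired t-test works on the differences dᵢ between paired measurements: t = (d̄ − δ₀) / (s_d / √n) with n − 1 degrees of freedom, where δ₀ is the hypothesized mean difference, for example the 10 joules from the research director's question. The Python sketch below uses hypothetical toughness values; each position in the two lists is assumed to be one pair measured under the same conditions.

```python
import math
import statistics

def paired_t(x, y, delta0=0.0):
    """t statistic and df for H0: mean(x - y) == delta0, using paired differences."""
    if len(x) != len(y):
        raise ValueError("paired samples must have the same length")
    d = [xi - yi for xi, yi in zip(x, y)]
    n = len(d)
    se = statistics.stdev(d) / math.sqrt(n)  # standard error of the mean difference
    return (statistics.mean(d) - delta0) / se, n - 1

# Hypothetical toughness values in joules for powder A and powder B (one pair per position).
powder_a = [20, 22, 25]
powder_b = [8, 10, 12]
t, df = paired_t(powder_a, powder_b, delta0=10)
print(round(t, 3), df)  # 7.0 2
```

Because only the differences enter the formula, variation shared by both members of a pair cancels out, which is why pairing can detect smaller effects than a two-sample t-test on the same data.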

08 TEST FOR PROPORTIONS, BINOMIAL DISTRIBUTION

In the eighth Minitab tutorial, we are on the shop floor of skateboard deck production. Here, in a fully automated production process, several layers of wood are pressed together under high pressure with water-based glue and a special epoxy resin to form a skateboard deck. Our task in this Minitab tutorial will be to use a hypothesis test, the test for one proportion, to draw an indirect statistical conclusion about the production population, so that we can make a statement about its defect rate with 95% confidence on the basis of a sample. For this purpose, we will subject a certain number of randomly selected skateboard decks to a visual surface inspection and, depending on their appearance, classify them as good or bad parts in terms of customer requirements. Good parts are skateboard decks that meet all visual customer requirements; bad parts are skateboard decks that do not meet them and would either have to be reworked at great expense or scrapped. In the first step, our task will be to define the boundary conditions for the weekly sample; in the second step, we will use the correct hypothesis test to draw an indirect conclusion from the defect rate of our sample to the defect rate in the population. The special feature of this training unit is that we are dealing with categorical quality judgments, to which the binomial distribution rather than the Gaussian normal distribution applies. In this Minitab tutorial, we will therefore get to know the binomial distribution in more detail and carry out the associated power analysis for binomially distributed data to determine the appropriate sample size.
With this preliminary work done, we can then properly perform the test for one proportion and, based on our sample results, make a recommendation for action with 95% confidence to the management of Smartboard Company that applies to the production population.

MAIN TOPICS MINITAB TUTORIAL 08

- Test for one proportion (1 sample)

- Understanding the binomial distribution

- Deriving the probability distribution of the binomial distribution

- Performing a power analysis for binomially distributed data

- Normal approximation to the binomial distribution

- Working with the “Tally Individual Variables” function

- Sample size as a function of the required power

- Formulation of the null hypothesis and alternative hypothesis

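For good/bad inspection results, an exact test for one proportion rests on binomial probabilities P(X = k) = C(n, k) · pᵏ · (1 − p)ⁿ⁻ᵏ. A minimal Python sketch of the one-sided upper-tail p-value (H₁: defect rate > p₀) follows; the sample sizes, defect counts, and p₀ values are hypothetical, and Minitab's own procedure may offer further options such as the normal approximation.

```python
from math import comb

def binom_pmf(k, n, p):
    """P(X = k) for X ~ Binomial(n, p)."""
    return comb(n, k) * p ** k * (1 - p) ** (n - k)

def upper_tail_p_value(defects, n, p0):
    """Exact one-sided p-value P(X >= defects) under H0: defect rate == p0."""
    return sum(binom_pmf(k, n, p0) for k in range(defects, n + 1))

# Hypothetical: 2 defective decks in a sample of 20, tested against p0 = 0.05.
print(round(upper_tail_p_value(2, 20, 0.05), 4))
# Toy check with easy numbers: 8 "defects" out of 10 against p0 = 0.5.
print(upper_tail_p_value(8, 10, 0.5))  # 0.0546875
```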
09 CHI-SQUARE TEST FOR PROPORTIONS

In the ninth Minitab tutorial, we are in the injection molding department of Smartboard Company, where skateboard wheels are produced. Skateboard wheels are manufactured by injection molding from the engineering plastic polyurethane. In the first step, the starting material, polyurethane granulate, is thermally liquefied in the injection molding machine. In the second step, the liquid polyurethane is injected into the mold at high pressure until the mold is completely filled. In the third step, the polyurethane is cooled by high-pressure water cooling. After cooling and solidification, the finished skateboard wheels are automatically ejected from the injection mold in the fourth step, and the mold is released for the next wheel. Large-scale injection molding production at Smartboard Company runs in three shifts (early, late, and night) so that the required high quantities can be produced and delivered to customers on time. For some time now, however, an increasing number of skateboard wheels have had to be scrapped due to various surface defects. It was therefore decided to launch a quality improvement project to identify the causes of the increased defect rates. Our central task in this Minitab tutorial will be to answer the following two key questions on the basis of a sample: 1. Is there a relationship between the high defect rate and the production shift? 2. Are certain defect types generated significantly more frequently than other defect types in the respective production shifts? The special feature of this task is that we are dealing with counts in more than two categories, for which the test statistic follows the chi-square distribution. In this training unit, we will learn how to properly perform and interpret the corresponding chi-square test.

MAIN TOPICS MINITAB TUTORIAL 09

- Preparing the data using the „Recode to text“ function

- Counting variables using the „tally individual variables“ function

- Use of bar charts in the chi-square test

- Identify interaction effects using grouped bar charts

- User-specific bar charts as part of the chi-square test

- Hypothesis definition in the chi-square test

- Derivation of the chi-square distribution from a standard normal distribution

- Number of degrees of freedom in the chi-square test

- Interpretation of the “cross tabulation” function in the context of the chi-square test

- Pearson’s chi-square value and the likelihood ratio
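In a cross tabulation, the Pearson chi-square statistic compares the observed counts O with the expected counts E = row total × column total / grand total, χ² = Σ (O − E)² / E, while the likelihood-ratio statistic is G = 2 Σ O · ln(O / E). Both have (rows − 1)(columns − 1) degrees of freedom. The shift-by-defect-type counts below are hypothetical, and the p-value lookup in the chi-square distribution is left to Minitab.

```python
import math

def chi_square_independence(table):
    """Pearson chi-square, likelihood-ratio G, and df for a table of observed counts."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    total = sum(row_totals)
    pearson = g = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / total
            pearson += (observed - expected) ** 2 / expected
            if observed > 0:  # a cell with O = 0 contributes 0 to G
                g += 2 * observed * math.log(observed / expected)
    df = (len(table) - 1) * (len(table[0]) - 1)
    return pearson, g, df

# Hypothetical counts: rows = shifts (early, late, night), columns = defect types.
counts = [[12, 5, 8],
          [10, 7, 6],
          [20, 4, 15]]
print(chi_square_independence(counts))
```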

10 ONE-SAMPLE POISSON RATE

In the tenth Minitab tutorial, we are in the shockpad production of Smartboard Company. Shockpads are plastic plates installed between the skateboard deck and the axles. They are primarily used to absorb vibrations and shocks while riding. High-priced designer shockpads are currently being produced for a very discerning customer. The rectangular shockpads are manufactured in series production by a stamping process from high-priced polyurethane panels produced specially for this customer. A punching batch always consists of 500 shockpads and corresponds to one delivery batch. Unlike other customers, this customer also attaches great importance to the visual appearance of the shockpads. Possible defects in the punching process, such as scratches, cracks, uneven cut edges, and punching cracks, must therefore be avoided, because under the contractual customer-supplier agreement, surface defects are permitted only up to a certain number. Specifically, the complaints agreement for Smartboard Company stipulates that each packaging unit of 500 shockpads may contain a maximum total of 25 defects, regardless of how the defects are distributed within the delivery. The basic defect rate per delivery that must not be exceeded is therefore 5% (25 defects per 500 shockpads). The central topic of this Minitab tutorial will therefore be to make a statement with 95% confidence about the actual defect rate in the population of shockpad production on the basis of an existing sample data set. We will learn that hypothesis tests based on the Poisson distribution can be used in such cases. We will learn to distinguish between total occurrences and the defect rate, and we will become more familiar with the Poisson distribution through its probability function, gaining insight into the normal approximation to the Poisson distribution. We will also learn about useful options such as the sum and tally functions.
With this knowledge, we will then be able to properly perform the corresponding hypothesis test on the total occurrences of Poisson-distributed data and make statements with 95% confidence about the total occurrences or defect rates in the population of the punching process.

MAIN TOPICS MINITAB TUTORIAL 10

- Total occurrences and defect rates of Poisson-distributed data

- Graphical derivation of the Poisson distribution

- Interpreting the probability density of the Poisson distribution

- Normal approximation to the Poisson distribution

- Determine total occurrences in Poisson distribution

- Hypothesis definitions for Poisson-distributed data

- Working with the sum function in the context of the Poisson distribution

- Working with the function “tally individual variables” in the context of Poisson distribution
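For count data such as the shockpad defects, the rate is the total number of occurrences divided by the number of units (25 defects / 500 shockpads = 0.05 defects per shockpad). An exact one-sided p-value for H₁: rate > λ₀ is P(X ≥ observed) for a Poisson variable with mean λ₀ × units. The observed defect count below is hypothetical, and Minitab's own procedure may use a different computational method, so treat this Python sketch as an illustration of the idea only.

```python
import math

def poisson_upper_tail(k, mu):
    """P(X >= k) for X ~ Poisson(mu), computed as 1 - P(X <= k - 1)."""
    term = math.exp(-mu)        # P(X = 0)
    cdf = 0.0
    for i in range(k):
        cdf += term
        term *= mu / (i + 1)    # P(X = i + 1) obtained from P(X = i)
    return 1.0 - cdf

units = 500                     # shockpads per delivery batch
max_rate = 25 / units           # 0.05 defects per shockpad allowed by the agreement
observed_defects = 34           # hypothetical count from one inspected batch
rate = observed_defects / units
p_value = poisson_upper_tail(observed_defects, max_rate * units)
print(f"observed rate {rate:.3f}, p-value {p_value:.4f}")
```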

11 ONE-WAY ANALYSIS OF VARIANCE (ANOVA)

The eleventh Minitab tutorial is about the ball bearings that Smartboard Company uses for its skateboard wheels. An important criterion for the fit is the outer diameter of the ball bearings, and Smartboard Company is comparing three new ball bearing suppliers for this purpose. This training session focuses on the question of whether the outer diameters of the ball bearings from the three suppliers differ significantly from one another. We will see that the special feature of this task is that we are now dealing with more than two processes, or more than two sample means, so the hypothesis tests we have learned so far will not help. Before we start with the actual analysis of variance, often abbreviated as ANOVA, we will first use descriptive statistics to get an overview of the location and dispersion parameters of our three supplier data sets. Before starting the analysis of variance, we must also carry out a power analysis to determine the appropriate sample size. To understand the principle of analysis of variance, we will, for didactic reasons, first work through this relatively complex method step by step on a small data set, and then use this preliminary work to move on to the one-way analysis of variance, usually called one-way ANOVA in day-to-day business. This approach lets us determine the individual components of variation that make up the total variation. We will take a closer look at the ratio of these components using the F-distribution, named in honor of Sir Ronald Fisher. We will learn how to use the F-distribution to determine how likely it is to observe a variance ratio, the F-value, at least as large as the one calculated. For a better understanding, we will also derive the p-value for the respective F-value graphically.
In the final step, the associated hypothesis tests are used to determine properly whether there are significant differences between the ball bearing suppliers. Particularly useful in the context of one-way ANOVA are the grouping letters generated by Fisher's pairwise comparisons, which help us recognize very quickly in day-to-day business which ball bearing suppliers differ significantly from each other.

MAIN TOPICS MINITAB TUTORIAL 11

- Setting up the hypothesis tests as part of the one-way ANOVA

- Adj SS and Adj MS values within the framework of the one-way ANOVA

- Derivation of the F-value within the framework of the one-way ANOVA

- Derivation of the F-distribution within the framework of the one-way ANOVA

- Error bar chart as part of the one-way ANOVA

- Fisher pairwise comparisons

- Interpretation of the grouping letters based on the Fisher LSD method

- Interpretation of the Fisher individual tests for differences of means

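The F-value of a one-way ANOVA is the ratio of the mean square between groups (the factor, here the supplier) to the mean square within groups (the error): F = MS_factor / MS_error, with SS_factor = Σ nᵢ (x̄ᵢ − x̄)² and SS_error = Σᵢ Σⱼ (xᵢⱼ − x̄ᵢ)². With a single factor, these sums of squares coincide with the Adj SS values. The Python sketch below uses toy numbers standing in for the outer-diameter measurements of three suppliers.

```python
def one_way_anova(groups):
    """Return sums of squares, degrees of freedom, mean squares and the F-value."""
    all_values = [x for g in groups for x in g]
    grand_mean = sum(all_values) / len(all_values)
    means = [sum(g) / len(g) for g in groups]
    ss_factor = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    ss_error = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)
    df_factor = len(groups) - 1
    df_error = len(all_values) - len(groups)
    ms_factor = ss_factor / df_factor
    ms_error = ss_error / df_error
    return {"SS factor": ss_factor, "SS error": ss_error,
            "DF factor": df_factor, "DF error": df_error,
            "MS factor": ms_factor, "MS error": ms_error,
            "F": ms_factor / ms_error}

# Toy values for three suppliers (not real diameter data).
result = one_way_anova([[1, 2, 3], [4, 5, 6], [7, 8, 9]])
print(result["F"])  # 27.0
```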
12 GENERAL LINEAR MODEL: 2-WAY ANOVA

In the twelfth Minitab tutorial, we accompany the quality team at Smartboard Company as they examine the material strength of skateboard decks using two-way ANOVA. North American maple is always used as the base material for high-quality skateboard decks, as this wood is particularly stable and resistant due to its slow growth. To produce the skateboard decks, two layers of maple are first pressed together under pressure with water-based glue and a special epoxy resin mixture in an automated laminating process. The bond between these first two layers in the core is particularly crucial for the cohesion of the entire laminated composite. The quality of this core lamination is tested on a random basis in a tensile shear test, in which the two laminated layers of wood are pulled apart by a force applied parallel to the joint surface until the laminate joint tears open. In principle, the higher the maximum tensile shear strength achieved in the laboratory test, the better. In this Minitab tutorial, we will be dealing with two categorical factors, each with three levels. The core objective of this training unit will be to draw an indirect statistical conclusion about the production population on the basis of a sample as to whether these factors have a significant influence on the tensile shear strength. We will also analyze whether there are interaction effects between the factors, that is, whether the effect of one factor depends on the level of the other, which would also significantly influence our response variable, tensile shear strength. However, we first need to carry out some data management, because the structure of the measurement protocol requires the data to be restructured. In doing so, we will get to know the very useful option for stacking blocks of columns.
To get a first impression of the trends and tendencies in our data, we will then work with boxplots before starting the two-way ANOVA. Well prepared, we will then move on to the actual two-way analysis of variance to assess the significance of the trends and tendencies identified in the boxplots. In this context, we will also learn how to create and interpret the very useful main effects and interaction plots. Finally, we will use Tukey's pairwise comparisons and the associated grouping letters to work out which of the factor-level combinations actually differ significantly.

MAIN TOPICS MINITAB TUTORIAL 12

- 2-Way ANOVA, fundamentals

- Data management in the preview window for data import

- Stacking of column blocks within the framework of ANOVA

- Boxplot analysis within the framework of ANOVA

- Adjust interquartile ranges graphically

- Include reference lines as part of the boxplot analysis

- Definition of the general linear model (GLM)

- Interpretation and evaluation of the variance and residual analysis

- Working with the brushing palette in the context of residual analysis

- Interpretation of the ANOVA model quality

- Working with the histogram in the context of residual analysis

- Factorial plots in the context of ANOVA

- Interpretation and editing of interaction plots

- Tukey’s pairwise comparisons test
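For a balanced design with two factors A and B and r replicates per cell, the two-way ANOVA splits the total variation into SS_A, SS_B, the interaction SS_A×B, and the error SS. The Python sketch below assumes a balanced layout (every cell has the same number of replicates); for such designs the sequential and adjusted sums of squares coincide, whereas unbalanced data require the general linear model approach used in Minitab. The 2 × 2 toy data are invented for illustration and are not tensile shear measurements; the tutorial itself uses three levels per factor.

```python
def two_way_anova(cells):
    """Balanced two-way ANOVA with replication.

    cells[i][j] holds the replicate measurements for level i of factor A and
    level j of factor B; every cell must contain the same number r of values.
    """
    a, b, r = len(cells), len(cells[0]), len(cells[0][0])
    cell_mean = [[sum(c) / r for c in row] for row in cells]
    grand = sum(sum(row) for row in cell_mean) / (a * b)
    mean_a = [sum(row) / b for row in cell_mean]
    mean_b = [sum(cell_mean[i][j] for i in range(a)) / a for j in range(b)]

    ss_a = b * r * sum((m - grand) ** 2 for m in mean_a)
    ss_b = a * r * sum((m - grand) ** 2 for m in mean_b)
    ss_ab = r * sum((cell_mean[i][j] - mean_a[i] - mean_b[j] + grand) ** 2
                    for i in range(a) for j in range(b))
    ss_e = sum((x - cell_mean[i][j]) ** 2
               for i in range(a) for j in range(b) for x in cells[i][j])

    df = {"A": a - 1, "B": b - 1, "A*B": (a - 1) * (b - 1), "Error": a * b * (r - 1)}
    ss = {"A": ss_a, "B": ss_b, "A*B": ss_ab, "Error": ss_e}
    ms = {k: ss[k] / df[k] for k in ss}
    f = {k: ms[k] / ms["Error"] for k in ("A", "B", "A*B")}
    return ss, df, f

# Toy 2 x 2 example with two replicates per cell.
ss, df, f = two_way_anova([[[1, 3], [3, 5]],
                           [[5, 7], [11, 13]]])
print(ss)  # {'A': 72.0, 'B': 32.0, 'A*B': 8.0, 'Error': 8.0}
print(f)   # {'A': 36.0, 'B': 16.0, 'A*B': 4.0}
```

A non-zero interaction term means the lines in the interaction plot are not parallel: here the effect of factor B is larger at the second level of factor A.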

13 BLOCKED ANOVA

In the thirteenth Minitab tutorial, we find ourselves in the maintenance and servicing department of Smartboard Company. This department is responsible for all improvement measures that ensure the best possible process availability, process efficiency, and quality output throughout the entire skateboard production process. To evaluate the process availability, process efficiency, and quality output of skateboard production with a single key figure, Smartboard Company uses the industry-proven key performance indicator O.E.E. (overall equipment effectiveness). The higher the O.E.E., the better the overall equipment effectiveness of skateboard production. Based on the O.E.E. indicator, the maintenance and servicing department has identified performance fluctuations in skateboard production and suspects that these fluctuations may be due to the different product variants. The aim of this Minitab tutorial is to find out whether the product variants, such as longboard, e-board, or mountainboard, have a statistically significant influence on the O.E.E. The goodness-of-fit measures of our variance model will be very important in this training unit, as they indicate how much of the total variance the model can explain. In this context, we will work in particular with the adjusted R-squared value to assess whether our blocked variance model actually has a high model quality. We will also take this opportunity to familiarize ourselves with the useful table Fits and Diagnostics for Unusual Observations, which provides a compact compilation of conspicuous observations with large residuals, that is, variation not explained by the model. To assess these residuals graphically, we will get to know the very useful Four in One residual plot.
We will also use the very helpful factorial plots and Tukey's pairwise comparisons to identify specifically which factor levels have significant or non-significant effects on our response variable, the overall equipment effectiveness O.E.E. Based on the grouping letters and the chart of Tukey simultaneous 95% confidence intervals for differences of means, we will finally be able to make a recommendation with 95% confidence to the management of Smartboard Company as to which targeted optimization measures should be implemented for each product type.

MAIN TOPICS MINITAB TUTORIAL 13

- Blocked ANOVA, fundamentals

- Interpretation of the quality measures R-sq and R-sq(adj)

- Residual analysis within the framework of the blocked ANOVA

- Fits and diagnostics for unusual observations

- Factorial plots within the framework of the blocked ANOVA

- Tukey simultaneous test of means

- Tukey simultaneous tests for differences of means

- Tukey simultaneous 95% confidence interval chart for differences in means
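The two quality measures discussed in this tutorial can be computed from the error and total rows of an ANOVA table: R-sq = 1 − SS_error / SS_total and R-sq(adj) = 1 − (SS_error / DF_error) / (SS_total / DF_total). The adjusted value penalizes model terms, such as additional blocks or factors, that do not reduce the error mean square enough. The Python sketch below uses toy values, not O.E.E. data.

```python
def r_squared(ss_error, ss_total, df_error, df_total):
    """R-sq and R-sq(adj) from the error and total rows of an ANOVA table."""
    r_sq = 1 - ss_error / ss_total
    r_sq_adj = 1 - (ss_error / df_error) / (ss_total / df_total)
    return r_sq, r_sq_adj

# Toy values: SS Error = 8 with 4 DF, SS Total = 120 with 7 DF.
r_sq, r_sq_adj = r_squared(8, 120, 4, 7)
print(f"R-sq = {r_sq:.2%}, R-sq(adj) = {r_sq_adj:.2%}")  # R-sq = 93.33%, R-sq(adj) = 88.33%
```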